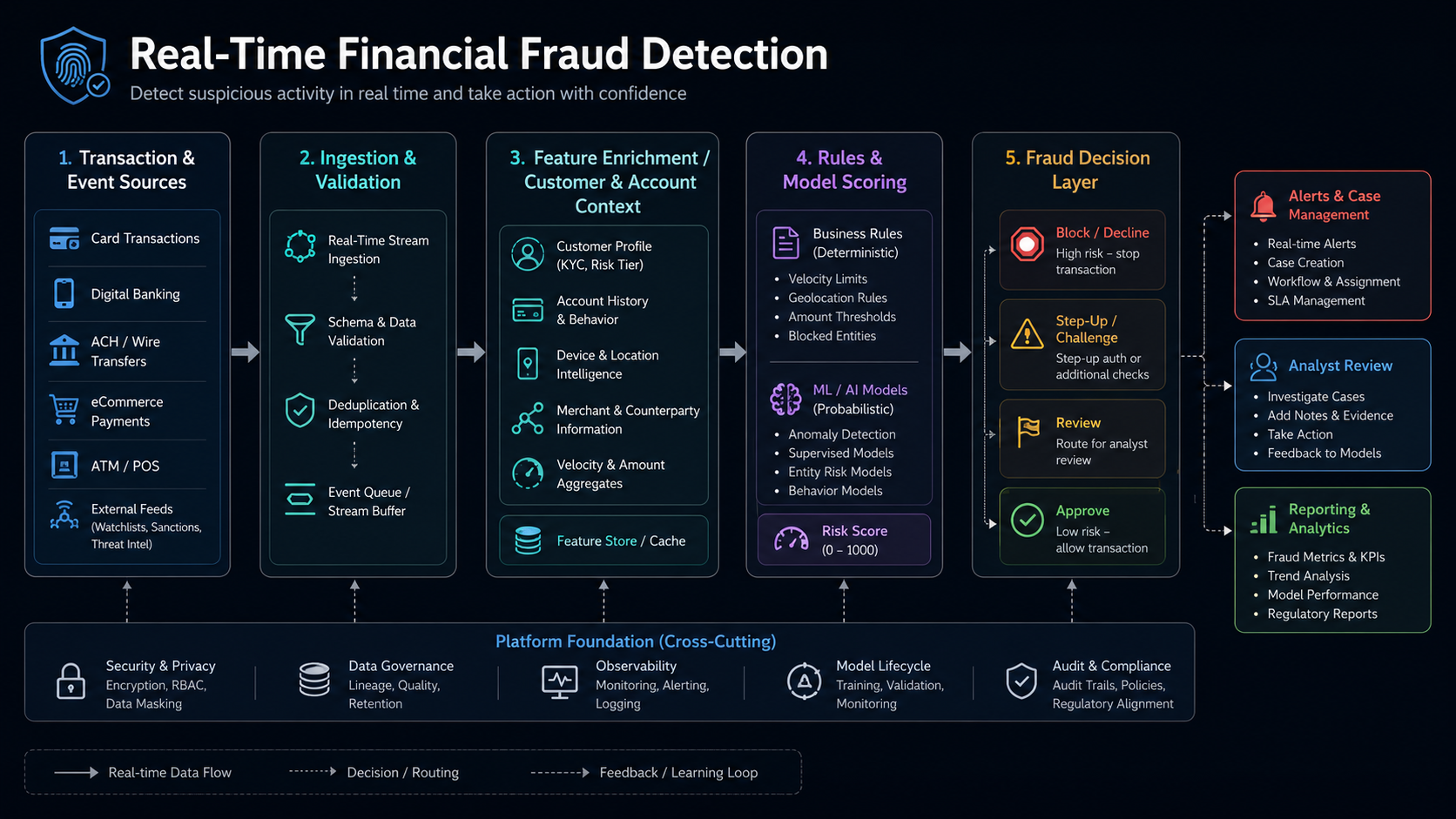

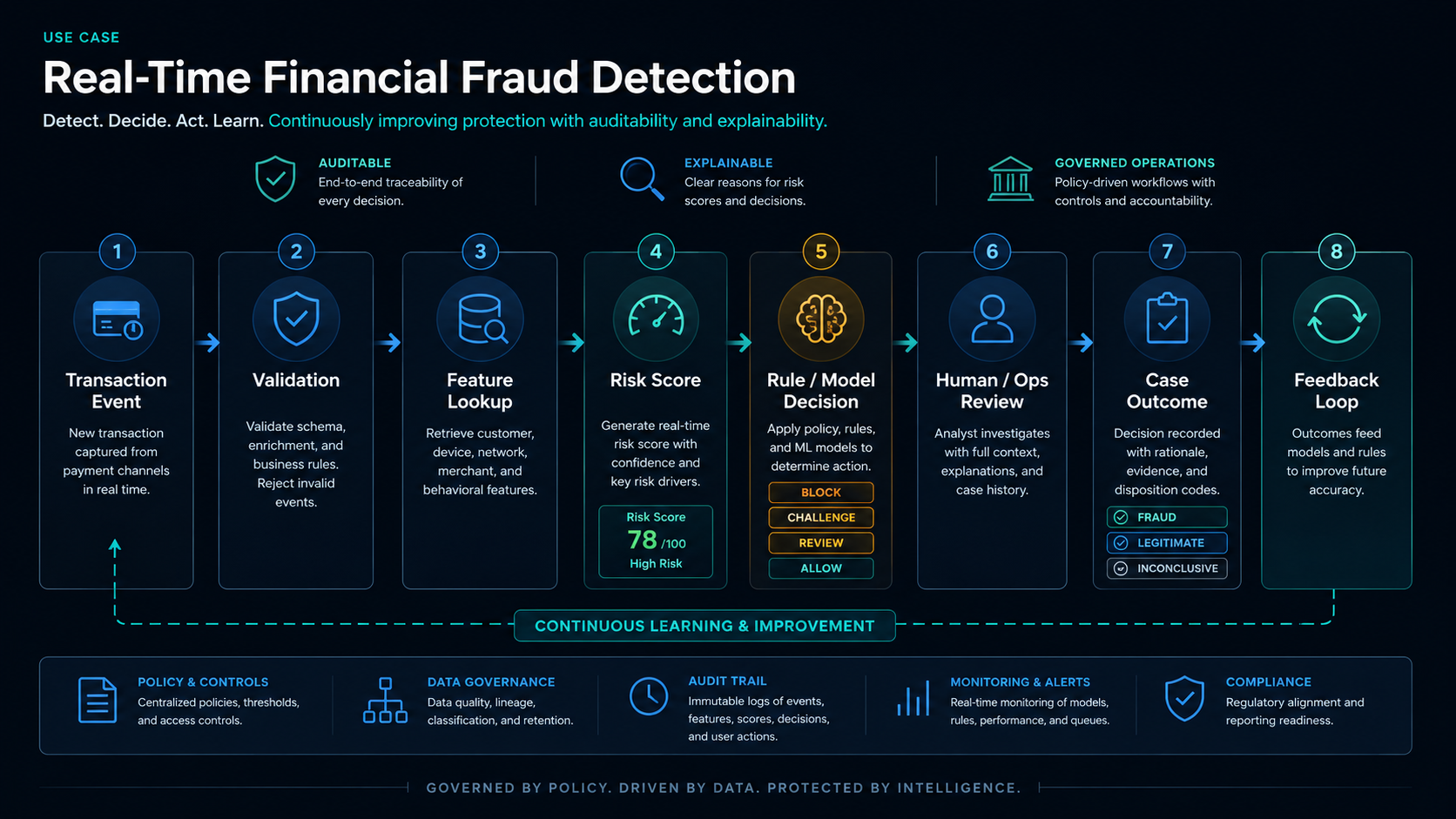

Executive situation

A risk-management company serving community banks and credit unions needed a scalable platform to handle growing data-processing volumes. Real-time detection of fraudulent activity was central to helping its customers avoid the impact of financial crime.